Perspectives on Reality, Knowledge, and Science

Formerly

Reflections on Mathematics as a foundation

for Reality

12/16/11

RAH

Science wars dvd/notes

has good input here, especially on historicity of science

Reality and

Knowledge of the Universe in Western Science

(Consciousness addressed in

separate papers)

Reality

“What is reality?” is a perennial question, and to assume this question is irrelevant only means we subscribe to a particular philosophy of reality.

Sociologist Pitirim

Sorokin sees “systems of truth” as a socially agreed upon construct. In his

classic Social and Cultural Dynamics[1] he postulates that whole

cultures alternate cyclically between a sensate mentality,

which perceives only the material, sensate world as real, and an ideational mentality,

which perceives that reality lies beyond the natural material world. He also

identifies an integrated idealistic mentality, which perceives that reality has

both sensory and supersensory aspects. He finds that the reason and logic of “rationalistic” philosophy can provide

such synthesis.

He sees these

three mentalities reflected in the ancient Roman, medieval, and renaissance

periods respectively.

Ideational and sensate values can

be traced back at least to classical Greek civilization, with the ideational

philosophy of Plato on the one hand, and the sensate

philosophy of Aristotle on the other. [2]

Sorokin believes that we are coming to the end of a six-hundred-year-long Sensate day, and that the transition to an Ideational period will be tumultuous.[3]

Knowledge

Professor Steven Goldman sees a conflict in western philosophy between two views of the nature of knowledge. One view sees Knowledge as universal, absolute, and certain. The other view sees knowledge as particular, relative, and probabilistic: a type of belief.

This

conflict was expressed in Plato’s dialogue The Sophist as a war between the gods, who espoused Knowledge, and the earth giants, whom Plato

identified as the Sophists, who espoused

knowledge.

Plato

pointed to mathematics as a body of Knowledge that was universal, necessary, and

certain.

(Aristotle,

the father of natural philosophy and western science, emphasized the importance

of observation, or experience as the key to knowledge.)

The

battle between Plato and the Sophists rages on today, between science and

contemporary postmodern philosophy.

Western Science

From a sensate

perspective, science would be considered a valid tool in the study of

“reality”. From an ideational perspective, metaphysics would be valid.

Goldman

notes that “modern science” inherited several principles from the

medieval study of natural

philosophy: natural phenomena should be explained in terms of

natural causes; knowledge of nature must be gained by direct experience or

experiment, and mathematics may be useful.

He

makes the case that the combination of the (Aristotelian) principle of direct

observation or experience and the (Platonic) principle of the universality of mathematics

introduced an ambivalence or internal conflict in science. [4]

Initially

there was no indication of any conflict. It was only when the two began to

diverge, beginning certainly with the Copernican controversy,

that the scientific community began to become aware of the conflict.

The Copernican “paradigm shift”

The

term "Paradigm Shift" was introduced by Thomas Kuhn, science

historian and philosopher, in his 1962 book, The Structure of Scientific

Revolutions. According to Kuhn a

“Paradigm Shift” is a distinctively new way for a society to think about

“reality”. A valid scientific idea may exist for years, without a corresponding

societal paradigm shift.

Belief in the literal truth of the Copernican concept of the earth revolving about the sun rather than vice versa is often given as an example of a paradigm shift. [5]

Initial

western ideas about planetary motion were based on Plato’s notion of the

perfection of the mathematical circle. In support of Plato’s notion, models were

developed which described celestial planetary motion in terms of perfect

circles by use of epicycles.

By use

of epicycles, the Ptolemaic system accounted for retrograde planetary motion,

but did not account for the observed changes in the phases of the inner planets,

Mercury and Venus.
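How an epicycle can produce retrograde motion may be easier to see in a small numerical sketch. It assumes a single epicycle riding on a single deferent, with the observer at the centre; the radii, the rotation rates, and the function names are illustrative assumptions, not values taken from the Ptolemaic tables.

```python
import cmath
import math


def apparent_angular_velocity(t, deferent_radius=1.0, deferent_rate=1.0,
                              epicycle_radius=0.5, epicycle_rate=8.0):
    """Rate of change of the planet's apparent longitude, seen from the centre.

    The planet rides on a small circle (the epicycle) whose centre rides on
    a large circle (the deferent). All parameter values are illustrative.
    """
    # Position: sum of two uniformly rotating vectors in the complex plane.
    z = (deferent_radius * cmath.exp(1j * deferent_rate * t)
         + epicycle_radius * cmath.exp(1j * epicycle_rate * t))
    # Velocity: the time derivative of each rotating vector.
    v = (1j * deferent_rate * deferent_radius * cmath.exp(1j * deferent_rate * t)
         + 1j * epicycle_rate * epicycle_radius * cmath.exp(1j * epicycle_rate * t))
    # d/dt arg(z) = Im(v / z); a negative value means apparent backward motion.
    return (v / z).imag


def retrograde_fraction(samples=10000, t_end=2 * math.pi):
    """Fraction of sampled times at which the apparent motion is backward."""
    backward = sum(
        1 for i in range(samples)
        if apparent_angular_velocity(t_end * i / samples) < 0
    )
    return backward / samples
```

With these assumed values the planet moves forward for most of its cycle but briefly appears to move backward whenever the epicycle carries it toward the observer, even though both circles turn uniformly in the same direction.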

Copernicus

was able to rid himself of the long-held notion that the Earth was the center

of the Solar system, but he did not question the assumption of uniform circular

motion. Thus, the Copernican model still could not explain all the details of

planetary motion on the celestial sphere without epicycles. However, the

Copernican system required many fewer epicycles than the Ptolemaic system

because it moved the Sun to the center. [6]

Scholars

most likely did not believe epicycles were actually in the natural world [7],

but considered them a “model” giving a more accurate description of the

positions of the planets in the sky.

The

Copernican system gave an accurate description of the positions of the planets,

including correct prediction of the phases of the inner planets. It also

reduced the need for epicycles, the relative simplicity of which has often been

assumed to imply correctness. (This view has been challenged.

[8])

However,

the Tychonic geo-heliocentric system was also a viable option, and was at the time observationally indistinguishable from the Copernican heliocentric system. [9]

As there was no clear proof of how the planets “really” moved, the Copernican

and Tychonic systems were both legitimately still seen as alternative models. [10]

Still, Galileo, who wrote “The Book of Nature

is written in the language of mathematics,”

argued for the Truth of

the Copernican model, [11]

but the Church

had a point: how could Galileo KNOW the Truth?

Though

contrary to experience, in time, and with additional confirmation, as put

forward by Kepler [12]

and others, the

Copernican mathematical theory became accepted. [13]

What

appears to have changed

was the increasing credibility of mathematics as not only modeling the

world, but representing the reality of it

in the face of apparently contradictory evidence from everyday experience.

The seductiveness of math

Up to a certain

point, mathematics clearly brings insight into our understanding of the

physical world.

Correspondence Between Math and Physical Patterns

Following the lead taken by natural philosophy, scientists

in the 20th century acknowledged the important role of mathematics.

In 1960, Eugene Wigner wrote in his article “The Unreasonable Effectiveness of Mathematics in the Natural Sciences” that when physicists pick a pattern from mathematics to represent patterns in the natural world, the mathematical pattern often fits nature with amazing accuracy.

Mathematics developed for one particular physical application often turns out to be applicable to other

physical applications. For example, trigonometry, originally developed in the

study of astronomy, finds application in the modeling of a vibrating spring,

heat flow, and electromagnetism.

Electromagnetic radiation is transmitted by sinusoidal

waveforms. Trigonometric functions are solutions to James Clerk

Maxwell’s equations of electromagnetism. [14]
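The statement that trigonometric functions solve Maxwell’s equations can be checked in the simplest case. The following is a sketch only, assuming a plane wave in empty space travelling along the x axis; the symbols E_0, k, and ω (amplitude, wavenumber, angular frequency) are notation introduced here and do not appear in the sources cited.

```latex
% In vacuum, Maxwell's equations reduce to the wave equation for one field component:
\frac{\partial^2 E}{\partial x^2} = \frac{1}{c^2}\,\frac{\partial^2 E}{\partial t^2}

% Trial solution: a sinusoid
E(x,t) = E_0 \sin(kx - \omega t)

% Differentiating twice in each variable:
\frac{\partial^2 E}{\partial x^2} = -k^2 E, \qquad
\frac{\partial^2 E}{\partial t^2} = -\omega^2 E

% Substituting, the wave equation is satisfied whenever
\omega = c\,k
```

The sinusoid is therefore a solution for any wavelength, provided the wave travels at the speed c.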

Math Leads to New Physical Insight

The correspondence between

mathematics and “reality” may result in new mathematics as

well as deeper insight into “reality”.

It was found that

Physical Analogs

Correspondences between the mathematical descriptions of different physical systems were discovered.

Maxwell developed his equations from a series of iterations, starting initially with mechanical analogs. These mechanical analogs predicted two new phenomena: a new type of current, which would arise whenever the electric field changes (displacement current), and the transverse character of EM waves, because the changing electric and magnetic fields were both at right angles to the direction of wave propagation. [16]

In his next iteration he suspected that the ultimate mechanisms of nature might be beyond our comprehension, so he set his mechanical model aside and chose to apply Lagrange’s method of treating the system like a black box: if you know the inputs and the system’s general characteristics, you can calculate the outputs without knowledge of the internal mechanism. His first assumption was that EM fields hold energy, both kinetic and potential. Electromotive and magnetomotive forces are not forces in the mechanical sense, but act in an analogous way. The result of his new approach was vector calculus.

At a very practical level, systems, or

circuits, of electrical, mechanical, fluid and thermal elements are governed by

the same differential equations, and the elements of these systems have

analogous math descriptions. The electrical analog of mechanical, fluid, and

thermal systems is

the basis for the analog computer. [17]
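The claim that different physical systems obey the same differential equation can be illustrated with a minimal sketch. It assumes a mass-spring-damper driven by a constant force and a series RLC circuit driven by a constant voltage; all numerical values and the function name are illustrative assumptions.

```python
def simulate_second_order(inertia, damping, stiffness, drive, dt=1e-3, steps=5000):
    """Integrate  inertia*y'' + damping*y' + stiffness*y = drive  from rest.

    Uses the semi-implicit Euler method and returns the list of y values.
    """
    y, v = 0.0, 0.0
    history = []
    for _ in range(steps):
        a = (drive - damping * v - stiffness * y) / inertia
        v += a * dt
        y += v * dt
        history.append(y)
    return history


# Mechanical system: mass m, damper c, spring k, constant force F (assumed values).
m, c, k, F = 2.0, 0.5, 8.0, 4.0
displacement = simulate_second_order(m, c, k, F)

# Series RLC circuit: inductance L, resistance R, capacitance C, source voltage V.
# Analogy: L <-> m, R <-> c, 1/C <-> k, V <-> F, charge q <-> displacement x.
L, R, C, V = 2.0, 0.5, 1.0 / 8.0, 4.0
charge = simulate_second_order(L, R, 1.0 / C, V)
```

Because the two systems share one equation, the same routine serves both, and with matched parameters the charge on the capacitor follows the same curve as the displacement of the mass. This is the sense in which an electrical circuit can stand in for a mechanical one.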

Symmetry

Symmetry is an

important concept in mathematical physics.

When Maxwell

initially wove the equations of electricity and magnetism together, he thought they

looked unbalanced. He therefore added a term to make the equations more symmetric. The extra term could be interpreted as the creation of a magnetic field by a varying electric field. This effect turned out to actually exist. Inclusion of the extra term allowed trigonometric functions, that is, electromagnetic waves, to be solutions to the equations.

In the broadest

terms, symmetry exists when something remains unchanged during a

mathematical operation.

Even though the

mathematical symmetries may be hard, or even impossible to visualize

physically, they can point the way to new principles in nature. Searching for

undiscovered symmetries has thus become a major tool of modern physics. [18]

The Great Schism

Beyond

a certain point, being the very small and the very large, it became obvious

historically that deduction and direct observation were no longer reconcilable with

what the logic of mathematics tells us about the natural world,

and in many cases mathematics cannot be “checked” to see if it indeed gives us

the “correct” answer.

There are

several perspectives:

For

some, the direction of math in the sciences led away from a sensate description

of all reality; a dissolution of the sensate world, based on the dissolution of

reality at the scale of the very small.

For

others, such as advocates of the

Others

questioned the claim that mathematics was telling us about “reality” at all at

the scale of the very large or small.

Still

others questioned the claim that the logic of science could tell us anything

about reality in even our macroscopic everyday world.

The

result has been not only uncertainty and disputes within the scientific community [19], but also a backlash of hostility toward science in a significant portion of the non-scientific community.

Theory of Everything (TOE)

Physicists now

believe that all forces exist simply to enable nature to maintain a set of

abstract symmetries.

They have

come to understand that the known universe is governed by the four mathematically expressed forces of gravity,

electromagnetism (EM), and the weak and strong nuclear forces. The strong

nuclear force holds the protons and neutrons of the nucleus together; the weak

nuclear force allows neutrons to turn into protons, giving off radiation in the

process. The atomic bomb releases the power of the strong nuclear force. Physicists

since Einstein have been trying to understand gravity, and to reduce the

expressions for the four forces of the universe to a single equation. [20]

Einstein’s General Theory, today’s

standard theory

of gravity, deals with large spaces and demands smooth variations in space-time. Currently science has no equations that can be used to describe something that is both very

massive, where

normally the General Theory would apply,

and very small, where normally quantum mechanics would apply. [21]

The search is on to develop the

Theory of Everything (TOE),

and some think a primary contender is the next iteration in

particle physics: “String,” or “Super

String” theory.

In 1967, Murray Gell-Mann was lecturing on the striking regularities in data pertaining to the collisions of protons and neutrons. An Italian grad student, Gabriele Veneziano, became intrigued, and found a simple math function that would describe the regularities. Why this function worked was presented in 1970 in the work of Leonard Susskind and Yoichiro Nambu. They found that Veneziano’s mathematical function would arise from the underlying theory if you modeled the protons and neutrons not as points, but as tiny vibrating strings. [22]

In 1984, John Schwarz and Michael Green resolved the last major inconsistency in string theory. This did not make the theory any easier to solve, but it convinced many leading physicists, especially Edward Witten, that the math-based theory had too many miraculous properties to ignore. String theory then jumped from laughingstock to hottest thing in physics. [23]

Edward Witten showed that the

original 5 different versions of string theory were merely different

perspectives on the same thing. His mathematical theory, called “M” theory, requires 11

dimensions, and also predicts multiple universes, [24]

all quite inconsistent with our observed reality.

What is so alluring about String

Theory? Its mathematical elegance; its aesthetics; some scientists

think that certain relationships are so appealing that they must be correct. [25]

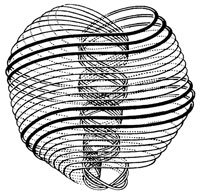

Interestingly, in the esoteric tradition, as represented by Charles Leadbeater,

Annie Besant, and the Theosophists in the book Occult Chemistry (1919), the

most fundamental particles were described as positive and negative stringed

vortices of energy, called “Anu”; the “ultimate

atom”. The word Anu is Sanskrit for atom or molecule,

and a title of Brahma. Needless to say, this concept of stringed vortices was not

the product of advanced mathematics.

“Anu”; the

“ultimate atom” [26]

The hydrogen atom was said to consist of 18 Anu units; 9 positively charged, and 9 negatively charged

(antiparticles). Contemporary Anu proponents suppose

the positive and negative spiral allow a transfer of energy to and from the

zero point field. [27]

These

purported structures would

correspond to the hypothetical constituents of quarks, given the “Russian doll”

nature of matter. [28] In 1974, physicists Jogesh Pati and Abdus Salam speculated

that a small family of particles they called preons

could explain the proliferation of quarks and leptons.

Although

not currently in favor with many physicists, the preon

idea has not been ruled out. In 1999, Johan Hansson and

his coworkers proposed that three types of preons

would suffice to build all the known quarks and leptons. [29]

The alternative physics community has developed a mathematical concept strikingly similar to the Anu concept: B.G. Sidharth, of the Centre for Applicable Mathematics & Computer Sciences in India, writes: “The physical picture is now clear: A particle can be pictured as a fluid vortex which is steadily circulating along a ring (or in three dimensions, a spherical shell) with radius equal to the Compton wavelength and with velocity equal to that of light.” [30] The topic is quantum black holes, the name is the Compton Radius Vortex, described as another recent electron model by Richard Gauthier. [31]

Dissolution of the Sensate World View

A Grand Unified Theory (GUT), as

opposed to a TOE, does not include gravity in its definition. Physicists

willing to avoid unification of gravity see in Quantum Mechanics, and

alternatively the Holographic Universe, and Zero Point Field, mathematics which

dissolves the material universe at one level, but then unifies it at a deeper

level. All three theories tend to produce similar results, account

for biological processes to varying degrees, are sympathetic with the idea of consciousness, accommodate “information” as a fundamental unit, and are ultimately related to one another.

Is there something in ZPF and HU

that could correspond to non-locality in QM?

They may very likely be thought of as three mathematical “lenses”, each of which reveals different aspects of our reality.

Quantum Mechanics

Quantum Mechanics, based rigorously in mathematics, was developed in the 1920s, and has been highly successful at explaining many phenomena, including spectral lines, the Compton effect, and the photoelectric effect, where electromagnetic radiation (photons) causes a current of electrons. [32]

Multiple logically consistent mathematical representations of Quantum Mechanics help to cement its (mathematical) credibility. [33]

Scientists went through a

crisis period in trying to determine what quantum mechanics meant to

macroscopic reality. Particles (electrons, quarks, etc.) cannot be thought of

as "self-existent", as they pop into and out of existence in an

apparently random way.

Erwin Schrödinger, originator of

wave quantum mechanics, was not happy with Max Born's

statistical / probability interpretation of waves that became commonly accepted

in Quantum Theory. He believed waves were real, and the “particles” in

wave-particle duality were merely an artifact.

Werner Heisenberg, originator of matrix quantum

mechanics, argued that what was truly fundamental in

nature was not the particles themselves, but the symmetries, or patterns that

lay beyond them. These fundamental symmetries could be thought of as the

archetypes of matter and the ground of material existence. The particles

themselves would simply be the material realizations of those underlying

abstract symmetries. These abstract symmetries, normally only ascertainable

through mathematics,

could be taken as the scientific descendants of Plato’s ideal

forms. [34]

The dominant perspective resulting from what

many termed “quantum weirdness” was the

Renowned quantum physicist Anton Zeilinger, of the University of Vienna, found that objects, not merely sub-atomic particles, exhibit wave-particle duality. He used a Talbot-Lau interferometer in a variation on the double-slit experiment to show that large, asymmetric molecules of up to 100 atoms each create an interference pattern with themselves, i.e., exhibit wave-object duality. Even molecules need some other influence to settle them into a completed state of being. [35]

Further, non locality, or entanglement, has

been proven to be macroscopically physically real, and forces a reconsideration

of our most fundamental notions of space and causality. [36]

One

consequence of quantum entanglement is the recognized fact that there is no

such thing as an independent observer in quantum experiments.[37]

Spin is a property possessed by most subatomic particles,

and experimenters have long accepted that the spin of a particle will always be

found to point along whichever axis is chosen by the experimenter as his

reference, defined in practice by an electric or magnetic field. If the experimenter readjusts his apparatus to a different reference angle, he will find

that the spin will again point in the direction of the new reference angle. It is a

property which completely undermines any attempt to make sense of

the concept of direction in the quantum domain. [38]

Experimental outcome is also affected by the act of observation: what behaves as a wave becomes, when observed, a particle.

Zero Point Field

Quantum Mechanics and the Zero

Point Field are the most obviously related, as they are mutually interdependent

for their existence.

To quantum physicists attempting

to model the electron mathematically, the vacuum, or Zero Point Field was seen

as an annoyance which introduced infinities into their equations. In Paul

Davies’ words: “The presence of infinite terms in the theory is a warning flag

that something is wrong, but if the infinities never show up in an observable

quantity we can just ignore them and go ahead and compute.” [39]

The hidden mechanism which

prevents atomic collapse appears to be the Zero Point Field. In 1987, Hal Puthoff was able to demonstrate in a paper

published by Physical Review, that the stable state of matter depends on the

dynamic interchange of energy between the subatomic particles and the

sustaining Zero Point Energy field. [40]

In quantum field theory, the

individual particles are transient and insubstantial. The only fundamental reality is the underlying entity, the Zero Point Field itself. [41]

Interestingly, Timothy Boyer and

Hal Puthoff showed that if you take into account the

Zero Point Field, you don’t have to depend on Bohr's Quantum Mechanical model. One can show

mathematically that electrons lose and gain energy constantly from the ZPF in dynamic equilibrium, balanced at exactly the right orbit. Electrons get their energy to keep going because they are refueling by tapping into these fluctuations of empty space.

Puthoff showed that fluctuations

of the ZPF drive the motion of subatomic particles and that all the motion of

all the particles generates the ZPF.

Timothy Boyer showed that many

of the weird properties of subatomic matter which puzzled physicists and led to

the formulation of strange quantum rules could easily be accounted for in

classical physics, if you include the ZPF: uncertainty, wave-particle duality,

the fluctuating motion of particles all had to do with interaction of the ZPF

and matter. [42]

Holographic Universe?

Peter Russell has pointed out a

few of the paradoxes of light. In relativity theory, at the speed of light time

stops, which means for light there is no time whatsoever. Further, a photon can

traverse the entire universe without giving up any energy, which in effect says

for light there is no space. [43]

Light has other interesting properties, including the capability of producing

optical holograms.

The physics and physical process of

constructing a 3 dimensional hologram using two dimensional photographic plates

and coherent (laser) light sources is based on interference and

diffraction of light, and can be

“described by” complex Fourier mathematics.

William Tiller notes: “The entire basis of

holography is wave diffraction. Further, the resultant wave intensity

diffracted from any kind of direct space geometrical object can be shown to

arise from the modulus of the Fourier Transform for that geometrical

shape.” [44]
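The relation Tiller describes can be illustrated numerically. The following is a minimal sketch, assuming the simplest possible object, a single slit, and modelling the far-field (Fraunhofer) diffraction intensity as the squared modulus of a discrete Fourier transform of the aperture; the grid size, slit width, and function names are illustrative assumptions.

```python
import cmath
import math


def dft(signal):
    """Plain discrete Fourier transform (O(n^2)), adequate for a small sketch."""
    n = len(signal)
    return [
        sum(signal[j] * cmath.exp(-2j * math.pi * j * k / n) for j in range(n))
        for k in range(n)
    ]


def slit_aperture(n=256, width=16):
    """1.0 where the slit is open, 0.0 where the screen blocks the light."""
    start = (n - width) // 2
    return [1.0 if start <= j < start + width else 0.0 for j in range(n)]


def far_field_intensity(aperture):
    """Far-field intensity, modelled as |DFT(aperture)|^2."""
    return [abs(amplitude) ** 2 for amplitude in dft(aperture)]


intensity = far_field_intensity(slit_aperture())
```

The resulting list has a bright central maximum at index 0 and dark fringes at regular intervals (every 16th index for these assumed sizes), which is the familiar single-slit diffraction pattern.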

Vlatko Vedral notes that

in the invention of

optical holography, Dennis Gabor showed that two dimensions were

sufficient to store all the information about three dimensions. Three

dimensions are able to be represented due to light’s wave nature of forming

interference patterns. “Light carries an internal clock, and in the

interference patterns, the timing of the clock acts as the third dimension.” [45]

The Fourier transform

itself, with both phase and amplitude information, can be used to create the optical

hologram, a process called Fourier transform holography. This means that the

physical process of coherent light interference patterns and the Fourier

transform are interchangeable, which in turn implies they are in some sense

identical. The physical process “is” the mathematical Fourier transformation.

Bohm postulates a “Quantum Potential” which acts

on an elementary particle, in addition to the conventional EM, strong, and weak

nuclear forces.

The Quantum Potential carries information

about the environment of the quantum particle and thus informs and affects its motion. Since the information in the Quantum

Potential is very detailed, the resulting particle trajectory appears chaotic

or indeterminate. Bohm’s causal interpretation

suggests that matter has orders that are closer to mind than to a simple

mechanical order.

Bohm made use of the idea of the optical hologram to illustrate the concept of enfoldment of an implicate order, a holofield where all the states of the quantum are permanently coded. Observable reality emerges from this field by constant unfolding of the “implicate order” into the “explicate order.” These correspond to the holographic plate and the hologram in optical holography.

His holographic theory, developed between

1970 and 1980, yields numerical results that are identical to conventional QM,

but has not been examined in a serious way by the physics community.

The concept of a “holographic universe” has

been supported by the results of an investigation into gravity waves by a

German team. Their gravity wave detector had been plagued by an inexplicable

noise. According to a

researcher at Fermilab in

Holographic Brain

In a series of landmark

experiments in the 1920s, brain scientist Karl Lashley

found that no matter what portion of a rat's brain he removed he was unable to

eradicate its memory of how to perform complex tasks it had learned prior to surgery.

No one was able to come up with a mechanism that might explain this curious

"whole in every part" nature of memory storage. Then in the 1960s

Karl Pribram encountered the concept of holography

and realized he had found the explanation brain scientists had been looking

for. Pribram believes memories are encoded not in

neurons, or small groupings of neurons, but in patterns of nerve impulses that

crisscross the entire brain in the same way that patterns of laser light

interference crisscross the entire area of a piece of film containing a

holographic image. In other words, Pribram believes

the brain is itself a hologram.

The brain is able to translate

the avalanche of frequencies it receives via the senses (light, sound, etc.)

into the concrete world of our perceptions. Encoding and decoding frequencies

is precisely what a hologram does best. Just as a hologram functions as a sort

of lens, a translating device able to convert an apparently meaningless blur of

frequencies into a coherent image, Pribram believes

the brain also comprises a lens and uses holographic principles to

mathematically convert the frequencies it receives through the senses into the

inner world of our perceptions. Pribram's theory, in

fact, has gained increasing support among neurophysiologists. [47]

Reality and Knowledge Reconsidered

Information

Logic, logical analysis and

mathematics only arose as a result of the development of writing: you write it,

then you can analyze it. Writing, logical analysis, and mathematics itself are thus developments of the increasing availability of information.

Claude Shannon saw that

modern human beings communicate through codes—strings of letters,

words, sentences, dots and dashes of telegraph messages, patterns of electrical

waves flowing down telephone lines. Information is a logical arrangement of

symbols, and those symbols, regardless of their meaning, can be translated into

the symbols of mathematics. From this,

In the

early 1950s, James Watson and Francis Crick discovered that genetic information

was transmitted through a four-digit code—the nucleotide bases designated A, C,

G, and T. Biologists and geneticists began to draw on

So,

once again we have gone full circle, back to the Greek idea of the smallest indivisible

unit, this time without mass, but still not knowing what it even is.

Mathematical Correspondences

Mathematics not

only describes, predicts, dissolves, and unifies the material universe.

Intriguing correspondences

between nature and mathematics continue to be discovered, whose

significance is not yet, and may never be, understood.

For example, Georg F.B. Riemann added an improvement to an early formula for determining prime numbers which gives the “steps” we see in the actual distribution of prime numbers. The improvement consisted of adding waves at certain frequencies. Riemann’s guess of the frequency values needed is called “Riemann’s hypothesis”, and also “the music of the primes” as well as the “zeros of the Riemann zeta function”. These waves are the key to the successful prediction of prime numbers. Quantum systems have discrete energy levels, corresponding to waves vibrating at certain frequencies. Likewise the distribution of prime numbers is encoded in a discrete set of wave frequencies: the “magic frequencies”. Amazingly, Riemann’s frequencies look like the frequencies of a “quantum chaotic system”.
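The “steps” mentioned above can be made concrete with a short sketch that compares the actual staircase of prime counts with a smooth estimate. The smooth estimate used here, x / ln x, is an assumption standing in for the unnamed “early formula”; Riemann’s wave corrections are not implemented, and the function names are illustrative.

```python
import math


def primes_up_to(limit):
    """Sieve of Eratosthenes: return all primes <= limit."""
    if limit < 2:
        return []
    is_prime = [True] * (limit + 1)
    is_prime[0] = is_prime[1] = False
    for p in range(2, math.isqrt(limit) + 1):
        if is_prime[p]:
            for multiple in range(p * p, limit + 1, p):
                is_prime[multiple] = False
    return [n for n, flag in enumerate(is_prime) if flag]


def prime_staircase(limit):
    """Return a list whose entry x is the number of primes <= x."""
    prime_set = set(primes_up_to(limit))
    counts, running = [], 0
    for x in range(limit + 1):
        if x in prime_set:
            running += 1  # the count jumps by one at each prime: a "step"
        counts.append(running)
    return counts


def smooth_estimate(x):
    """Smooth approximation x / ln(x) to the number of primes <= x (x > 1)."""
    return x / math.log(x)


staircase = prime_staircase(1000)
gap_at_1000 = staircase[1000] - smooth_estimate(1000)
```

The staircase rises in unit jumps at each prime while the estimate is a smooth curve, so a gap always remains between them; the waves described in the text are what close that gap.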

There is some

undiscovered chaotic system whose quantum counterpart would hold the secret to

the music of the primes. Chaos, atoms, and prime numbers all connected. The Prime numbers of our

mental world are connected to the atoms of reality, and the link between them

is chaos. [48]

Although

mathematics can describe, predict, dissolve, and unify aspects of our material

universe, or offer tantalizing hints of connection, some mathematics appears to

offer no connection to the material universe.

We have

observed polar views of reality and knowledge. Reality can be viewed as

physical or metaphysical; knowledge can be viewed as absolute or relative. Can

these polarities be resolved?

Goldman has noted that science, as

opposed to math, has a historicity; that is, it changes iteratively and

inevitably through time.

Quantum

Mechanics, the Holographic Universe, and the Zero Point Field show how the

physical world dissolves into what might be called metaphysics at the level of the

Planck length.

This dissolution is

paralleled by the diversity of theories within the scientific community which

address problems in various levels of complexity in a description of “reality,”

which play out as if the human species has found itself on a featureless plain,

where all directions point equally to truth and falsity.

What

about knowledge?

As Steven Goldman points out, [49]

Immanuel Kant’s 1781 Critique of Pure Reason performed a Copernican revolution

on the concept of knowledge. In the old view, knowledge results when mind takes

in information from the senses about what is “out there” as experience. In Kant’s view, experience,

including math, is

constructed by the mind, and there is no direct knowledge or experience of anything “out there”.

Kant’s philosophy is a further

development of the earlier notion of primary and secondary sensations, in which secondary

sensations, such as color, taste, and odor

are produced by our sensory apparatus from the “powers” of the primary

sensations of size, motion, and shape, which really are “out there”.

Kant’s view is remarkably

consistent with our understanding of quantum mechanics, as well as the concept

of a holographic universe.

Kurt Gödel’s

Incompleteness Theorem

complements Kant’s philosophy in rendering not only reality, but our mind as

unknowable. This theorem states that any consistent formal system rich enough to express basic arithmetic contains true statements that it cannot prove according to its own defining set of rules. This theorem is normally applied to math, traditionally accepted as being the most complete and universal form of knowledge, and shows that all such logical systems are necessarily incomplete.

Gödel's

Theorem … has been taken to imply that human beings will never entirely

understand the mind, since the mind, like any other closed system, can only be

sure of what it knows about itself by relying on what it knows about itself. Although

this theorem can be stated and proved in a rigorously mathematical way, what it

seems to say is that rational thought can never penetrate to the final ultimate

truth. [50]

Going

back to Sorokin’s conceptions of reality, we see that contemporary science has

allowed us an “idealistic” mentality, as we see that reality at the everyday macro

level has sensory qualities, while at deeper levels it has supersensory or

metaphysical aspects.